Written by Leon Godwin, Principal Cloud Evangelist

As organisations rapidly accelerate their AI adoption, the democratisation of data has become a massive competitive advantage. However, this broader access to data is also increasing your exposure to security incidents, insider threats, and uncontrolled data sharing. If left unmitigated, these risks can quickly undermine organisational trust and slow down your pace of innovation.

Integrating Fabric and Purview to close the gap

Historically, data management was characterised by fragmented silos, which created a significant governance gap. You’ve likely heard the saying that “AI is only as good as your data”. If your underlying data estate is not secure, compliant, and governed, your AI initiatives will inevitably falter. According to a recent Microsoft Security Blog post, a staggering 86% of organisations lack visibility into their AI data flows, operating completely in the dark regarding the specific information their employees are sharing with AI systems. Furthermore, 67% of executives remain uncomfortable utilising data for AI initiatives due to persistent quality and security concerns.

Today, Microsoft Fabric solves these systemic inefficiencies by unifying your data estate with a data modernisation platform.

By integrating Microsoft Fabric with Microsoft Purview, organisations can finally close the AI security gap. This powerful combination allows data and AI leaders to enforce a “secure by default” environment, applying fine-grained access controls, mitigating insider risks, and preventing the oversharing of sensitive information in AI prompts. Ultimately, unified data governance is no longer just a regulatory checkbox. Data governance is now the key ingredient to innovation without compromising on security.

Data governance implementation

To successfully implement this unified data governance strategy, organisations must move beyond theory and leverage the native capabilities built into Microsoft Fabric and Microsoft Purview. Here are the concrete features and operational steps you should prioritise:

- Universal OneLake Security: Transition away from configuring security in every individual AI model or report. Fabric’s OneLake allows you to configure Object-Level Security (OLS), Row-Level Security (RLS), and Column-Level Security (CLS) directly at the data source. This “define once, enforce everywhere” approach ensures that, however a user sends a query, the exact same permissions apply natively.

- Data Loss Prevention (DLP) and Sensitivity Labels: Deep integration with Microsoft Purview allows you to apply sensitivity labels that persist as data flows from the lakehouse all the way down to exported Office files. Fabric also supports DLP policies that can automatically detect sensitive data, trigger policy tips, and restrict access to structured data in warehouses and databases to prevent data oversharing.

- Federated Governance via Domains: Avoid the bottleneck of a strictly centralised IT team by grouping your data into logical “Domains” (e.g., Finance, HR, Marketing). This allows you to delegate domain-specific governance and administration to the respective business units while still maintaining tenant-wide security guardrails.

- Insider Risk Management (IRM): Protect your intellectual property by leveraging IRM indicators for Fabric. These tools monitor user activity within the Lakehouse to detect and alert you to risky behaviours, such as the mass exporting of sensitive reports or potential data exfiltration.

- Lineage, Impact Analysis, and Endorsement: Establish trust in your data estate. Fabric provides visual data lineage to track the flow of data from source to destination, helping you answer “what breaks if I change this data?”. Pair this with the “Endorsement” feature to clearly label trustworthy, certified data items, guiding business users to the right source of truth.

- Network Security: Move beyond public internet access. Secure your environment by implementing Private Links to ensure traffic between your infrastructure and Fabric routes over the secure Microsoft global network, and utilise customer-managed keys to encrypt sensitive data at rest.
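To make the “define once, enforce everywhere” idea from the OneLake bullet more concrete, here is a minimal Python sketch. It is not the Fabric or OneLake API: `AccessPolicy`, `secure_read`, the sample rows and the `uk_analyst` policy are all hypothetical names invented for illustration. The point is only that one policy, stored with the data, is applied by every access path.

```python
from dataclasses import dataclass, field


@dataclass
class AccessPolicy:
    """Hypothetical policy stored once, alongside the data."""
    allowed_columns: set                             # column-level security
    row_filter: dict = field(default_factory=dict)   # row-level security


# Illustrative data and policy; every name and value here is invented.
SALES = [
    {"region": "UK", "customer": "A Ltd", "revenue": 1200, "margin": 0.31},
    {"region": "UK", "customer": "B Ltd", "revenue": 800, "margin": 0.27},
    {"region": "DE", "customer": "C GmbH", "revenue": 950, "margin": 0.22},
]
POLICIES = {
    "uk_analyst": AccessPolicy(
        allowed_columns={"region", "customer", "revenue"},
        row_filter={"region": "UK"},
    ),
}


def secure_read(rows, policy):
    """Filter rows first, then drop any column the policy does not allow."""
    visible = [
        row for row in rows
        if all(row.get(col) == val for col, val in policy.row_filter.items())
    ]
    return [
        {col: val for col, val in row.items() if col in policy.allowed_columns}
        for row in visible
    ]


def report_query(user):
    """Access path 1: a report. It cannot bypass secure_read."""
    return secure_read(SALES, POLICIES[user])


def ai_prompt_context(user):
    """Access path 2: context for an AI prompt. Same policy, same result."""
    return secure_read(SALES, POLICIES[user])
```

Because both `report_query` and `ai_prompt_context` go through the same `secure_read` call, a change to the policy takes effect on every path at once, which is the property the bullet describes.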

Ultimately, Microsoft Fabric represents a significant shift in the data platform landscape, offering the potential to eliminate data sprawl and simplify governance. However, simply having access to these native capabilities is not enough. Transitioning to this unified environment requires a commitment to “governance by design” by treating security, compliance, and quality as foundational elements rather than afterthoughts. From automated access restrictions in OneLake to advanced insider risk detection, Microsoft Fabric and Purview work together to ensure that protection is built-in, consistent, and end-to-end. The true challenge for organisations lies in execution. Start by establishing the right strategic frameworks, building a data culture, and planning a phased rollout that balances speed with architectural rigour.

Next Steps: Join our webinar

To help you navigate these challenges and confidently adopt Microsoft Fabric, I am thrilled to announce that I will be co-hosting an exclusive 60-minute webinar alongside Rana Kamel, Cloud and Data Solution Architect at Microsoft.

Event Details:

- Webinar: Mastering Security & Governance in Microsoft Fabric

- Date: Wednesday, 10th June 2026

- Time: 10:00 – 11:00 (Online)

- Speakers: Rana Kamel (Microsoft) & Leon Godwin (Cloud Direct)

Don’t let security concerns hold back your AI and data transformation. Sign up today to save the date in your diary.

You’re probably aware of Microsoft Fabric. But if you’re unclear whether it adds real value to your data analytics, you’re not alone. There’s some confusion around the relationship between Power BI and Microsoft Fabric. Here, we address common misconceptions, explain how Fabric provides a unified data platform and provide the opportunity to evaluate it at a consultant-led workshop.

Power BI–Fabric confusions

Microsoft Fabric is described as a ‘unified analytics platform’ covering data engineering, data pipelines, data warehousing, data science, real-time analytics, and Power BI. While it is all those things, and more, it’s this positioning that may be the cause of three common misconceptions:

- Fabric is the ‘new Power BI’

- Fabric is replacing Power BI

- You need Fabric to use Power BI.

Misconception 1: Fabric is the ‘new Power BI’

Microsoft Fabric isn’t the new Power BI, but it does incorporate it into an end-to-end data platform. Power BI remains the place where users consume insights, while Fabric handles everything that happens before that data reaches a dashboard.

Misconception 2: Fabric is replacing Power BI

Power BI will remain the integral analytics and visualisation interface within the Microsoft portfolio. Alongside this, Fabric will expand the backend data capabilities and provide greater integration, meaning Power BI users will still use the same day-to-day interface.

Misconception 3: You need Fabric to use Power BI

If you’re using Power BI with Pro (i.e. standard) or Premium Per User licences, you can still use Power BI standalone. You don’t need Fabric to build reports, share dashboards, and run most BI workloads. It’s only at the capacity-based Power BI Premium licensing level that things start to overlap with Fabric.

Like many other organisations, you may be using Power BI for reporting, Azure Data Factory for pipelines, and Synapse as your data platform.

Fabric now brings all these capabilities together, so there’s also a misconception that this ‘new thing replaces old thing’. In fact, all this functionality lives on within Fabric, and is augmented.

In reality:

- Microsoft Fabric = a complete end-to-end data platform, and

- Power BI = the analytics, visualisation, and reporting within it.

Licensing: is Fabric essentially an upsell?

The short answer is no. While Microsoft Fabric does require separate licensing, for IT leaders it’s much more nuanced than ‘another platform, another bill’. On the face of it, Fabric looks like an additional platform: new name (Fabric), new portal, and new capabilities. So it seems like a net-new purchase decision, with the added unpredictability of capacity pricing.

But it also represents an opportunity to consolidate and rationalise:

- replacing multiple Azure services

- unifying data architecture

- reducing integration overhead, and

- simplifying governance.

Licensing in a nutshell: Power BI is typically licensed on a per-user basis through Power BI Pro or Power BI Premium Per User (PPU) subscriptions, although some organisations use capacity-based Power BI Premium licensing. Fabric licensing is based on capacity units (of compute + storage + workloads) which power data engineering and all other functions in Fabric, including Power BI workloads. This is a key point – Power BI can run on Fabric capacity.

It also reflects the broader shift from the old world of separate tools (here Power BI, Synapse and Azure Data Factory) to the new world. Fabric provides a single, unified data platform that provides:

- one storage layer (OneLake)

- one compute model

- one governance layer

- one billing model.

Using Power BI, but not yet Fabric? What value does it add?

If your organisation isn’t using Fabric, you may be asking:

- What problem does it solve for us?

- Do we need this now, or can we wait?

In answering these questions, there are two things to consider. First, ask yourself ‘Do we just need reporting, or do we need a full data platform?’

Use Power BI alone if:

- You need dashboards and reporting

- Your data sources are already prepared, and

- You don’t need complex data engineering.

Adopt Fabric if you need:

- An end-to-end data pipeline

- A unified data platform

- To modernise your data estate, and/or

- To prepare for AI and advanced analytics.

Which brings us to your second consideration: your AI strategy.

Fabric helps organisations create a governed, reusable data foundation that’s essential for effective AI. The real value isn’t just ‘doing AI’, it’s ensuring data is trustworthy, shared, and consistently governed. Those foundations are where most organisations struggle, and they’re not something Power BI alone can solve.

In addition, Fabric provides a host of data benefits, such as data mirroring. Instead of manually transferring or duplicating data into Power BI, Fabric can mirror data from external sources directly into OneLake. This keeps data automatically in sync, reduces engineering effort, and ensures reporting stays up to date without any manual movement of data.

Learn more about Fabric

Organisations don’t always get the opportunity for hands-on learning to better understand the complexities of these platforms and their possibilities. That’s why we have started to deliver a programme of consultant-led workshops, produced alongside Microsoft.

The next of these free, one-day, online workshops is on Wednesday 27 May. Learn more and register.

‘Fabric Analyst in a Day’ is suitable for those familiar with Power BI data analysis, but new to Fabric. It is intermediate-level and will show you Fabric’s end-to-end analytics capabilities in practice: from data ingestion to report creation, with automatic refresh.

By the end of the workshop attendees will understand:

- If and how Fabric can add value to their organisation

- The productivity gains possible with Fabric’s integrated analytics capabilities

- How to unify and transform multi-source data with Shortcuts, Dataflows Gen 2 and Pipelines.

Fabric is not just a product add-on; it’s a platform shift. It brings Power BI into a broader, end-to-end data platform. Power BI remains the layer where users consume insights, while Fabric handles everything that happens before that data reaches a dashboard. This means organisations can spend less time managing data and more time using it to make decisions.

AI agents might’ve started the year as a buzzword, but they’ve quickly become the modus operandi. They’re moving quickly, developing from early personal productivity tools to systems that are accessing data, taking actions, and operating across your Microsoft 365 environment and beyond.

It signals a huge shift in our ways of working, and in turn introduces new challenges for IT teams: how do you safely govern, secure, and scale agents as they become part of everyday work across departments, in teams, and even for individuals?

Microsoft is answering that question with the release of Agent 365 on 1 May. Rather than being another agent-building tool, Agent 365 is designed as a control plane that gives IT and Security leaders visibility and control of agents across their organisations.

With General Availability right around the corner, we’re here to answer what Agent 365 is (and isn’t), why it will matter to your business, and how your technology teams should approach it.

What is Microsoft Agent 365?

Microsoft Agent 365 is the governance and management layer for AI agents, operating within the Microsoft 365 tenant. Rather than creating agents, it makes them observable, governable, and secure once they exist.

As organisations move to adopt agents built through various tools, such as Copilot Studio and in some cases third-party platforms, IT teams face the familiar risks of limited visibility, inconsistent controls, unclear ownership, and mounting audit pressure. Agent 365 closes those gaps.

At its core, Agent 365 gives organisations a single place to discover agents, apply policy, monitor behaviour, and integrate agent activity into existing security and compliance controls. It extends the same core principles IT teams already apply to users, devices and workloads – identity, least privilege, auditing and the rest – to AI agents.

The aim of Agent 365 isn’t to slow innovation. It’s to accelerate safe adoption as agents move beyond pilots and into production.

How is Microsoft Agent 365 different to Copilot, Agent Builder, and Copilot Studio?

Where Agent 365 fits among other Microsoft AI tools has so far been a common source of confusion. In simple terms:

- Copilot and Agent Builder help people use agents

- Copilot Studio and Azure AI Foundry help people build agents

- Agent 365 helps IT teams govern agents

Agent 365 doesn’t replace Copilot, Copilot Studio or Foundry – it sits above them. You still use those tools to create agents, define workflows, or integrate systems. Agent 365 becomes the layer that ensures those agents are known, approved, and operating within agreed boundaries once deployed.

This distinction matters because agent development and adoption is no longer limited to IT teams. As employees build their own agents and departments deploy automations, governance needs to scale without reverting to manual controls or blocking progress. Agent 365 provides that shared operating layer.

What’s included at GA on 1 May, and what will evolve over time?

Microsoft Agent 365 becomes generally available on 1 May 2026, alongside Microsoft 365 E7. At launch, the emphasis is squarely on visibility, identity and governance, rather than on autonomous execution. You’ll be able to:

- Discover and catalogue agents operating across the tenant

- Apply consistent identity and access controls using Microsoft Entra

- Monitor agent activity through existing admin and security tooling

- Extend auditing, compliance and data protection policies to agents

But Agent 365 will continue to evolve beyond GA, and Microsoft has been clear that agent maturity will be incremental, with additional capabilities emerging as customer patterns stabilise and governance models mature.

For IT teams, the important takeaway is not to wait for “full autonomy” before engaging with Agent 365, but to establish operational visibility and guardrails early, alongside adoption acceleration.

How does Agent 365 manage agent identity, access, and auditing?

One of the most significant aspects of Agent 365 is how it treats agents as first‑class identities within the Microsoft ecosystem. Using Microsoft Entra, agents can be brought into familiar identity and access models, enabling:

- Clear ownership and lifecycle management

- Least‑privilege access to data and services

- Consistent policy enforcement across users and agents

- Centralised auditing and activity tracking

This approach is critical as agents gain the ability to act on behalf of users or processes. Without an identity-driven model, organisations risk repeating shadow IT problems – this time with AI.

By integrating agent activity into the same security, compliance and monitoring platforms that teams already use, Agent 365 helps reduce fragmentation and operational overheads. This is where Agent 365 becomes a platform enabler, rather than an extra tool.
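The identity-driven model described above (clear ownership, least-privilege access, centralised auditing) can be sketched in a few lines of Python. This is an illustration of the principle only: it does not call Microsoft Entra or Agent 365, and `AgentIdentity`, `AgentRegistry`, the scope strings and the agent names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentIdentity:
    """Hypothetical record treating an agent as a first-class identity."""
    agent_id: str
    owner: str              # accountable human owner
    allowed_scopes: set     # least privilege: explicit grants only


@dataclass
class AgentRegistry:
    """Hypothetical central catalogue with a single audit trail."""
    agents: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def register(self, agent):
        self.agents[agent.agent_id] = agent

    def authorise(self, agent_id, scope):
        """Allow only registered agents with an explicit grant; log every decision."""
        agent = self.agents.get(agent_id)
        allowed = agent is not None and scope in agent.allowed_scopes
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "scope": scope,
            "allowed": allowed,
        })
        return allowed


registry = AgentRegistry()
registry.register(
    AgentIdentity("expenses-bot", owner="finance.lead", allowed_scopes={"expenses.read"})
)
```

In this sketch an unregistered agent is denied by default and still leaves an audit entry, which mirrors the aim of avoiding a new wave of shadow IT.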

How is Agent 365 licensed, and who actually needs it?

Agent 365 is licensed per user, rather than per agent or organisation. The licence applies to users who benefit from, or rely on, Agent 365 capabilities to govern and manage agents acting on their behalf, and is available:

- As a standalone add-on, or

- Included within the Microsoft 365 E7 SKU, alongside E5, Copilot, and Entra Suite

Not all your users will need Agent 365 on day one. For many organisations, adoption will start with IT admins, security teams and power users who are already deploying or overseeing agent use cases.

From a planning perspective, Agent 365 is designed to scale alongside your agent maturity, rather than penalise and inhibit early experimentation.

How should IT teams prepare for Agent 365?

Remember that Agent 365 is a solution to the challenges that IT teams are facing, not a new hurdle to overcome. The risk is ungoverned agents, and Agent 365 is the best possible way to tackle it.

As with onboarding any new tool, preparation is key. Important areas to focus on are:

- Understanding where agents are already being used (or tested)

- Aligning agent use with identity, data and security policies

- Clarifying ownership and approval models

- Treating agents as part of the broader Copilot and AI operating model

Agent 365 will be most valuable when it is introduced early, before agent sprawl sets in. This is an opportunity to shape how AI is operationalised, and balance innovation with responsibility rather than reacting after the fact. To find out more about how to implement Agent 365, reach out to one of our experts using the form below.

The speed of AI adoption has created a perfect storm for the modern organisation. While the rush to innovate with tools like Microsoft 365 Copilot and Azure AI Agents offers huge potential, the speed of this shift is frequently outpacing traditional security controls. In short, the traditional network perimeter is effectively obsolete.

Sensitive data no longer sits behind a firewall, but flows dynamically through AI prompts and generated outputs. Microsoft Purview’s Data Security Posture Management (DSPM) for AI represents a fundamental shift in strategy, moving security from the edge of the network directly to the data itself. To lead in the AI frontier, organisations must transition from reactive blocking to proactive governance.

It’s a big topic with lots to cover, so we’ve broken it down into seven key areas you need to explore to secure your organisation with Microsoft Purview.

Visibility and risk discovery with Purview AI Hub

You can’t protect what you can’t see. “Shadow AI” is rife as employees bypass official channels in favour of unmanaged tools. The Microsoft Purview AI Hub addresses this by providing a “single pane of glass” view of your entire AI ecosystem. It automatically discovers which AI applications are being utilised, identifies users interacting with sensitive data, and tracks the volume of sensitive information flowing through these models.

From a strategic perspective, visibility is not just a monitoring exercise – it’s also the essential first step that enables innovation. By providing real-time insights into risk trends and user behaviour, the AI Hub allows security leaders to make informed, data-led decisions rather than resorting to blanket bans that stifle productivity.

The AI Hub provides a ‘single pane of glass’ and automatically discovers which AI apps are being used, identifies users sharing sensitive data, and highlights the total volume of sensitive info flowing through AI interactions. It’s about giving you the data to make informed decisions without needing to block every new tool.

Karim Fayad, Microsoft

Dynamic data protection and the power of sensitivity inheritance

Within Microsoft 365 Copilot, security is not a one-time event but a continuous lifecycle, powered by the inheritance of Sensitivity Labels. If Copilot accesses a document labelled “Highly Confidential” to generate a summary or a briefing, that output automatically inherits the “Highly Confidential” label. This ensures that the protection, including encryption and access restrictions, persists regardless of how the data is transformed by AI.
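As a rough illustration of label inheritance, the sketch below picks the label for an AI-generated output from the labels of its source documents. It makes two assumptions: the label names other than “Highly Confidential” are invented, and where several sources are involved the most restrictive label is taken to win. The function `inherited_label` is hypothetical and not part of any Purview SDK.

```python
# Ordered from least to most restrictive. Only "Highly Confidential" comes
# from the article; the other label names are illustrative assumptions.
LABEL_PRIORITY = ["Public", "General", "Confidential", "Highly Confidential"]


def inherited_label(source_labels):
    """Return the most restrictive known label among the sources, or None."""
    known = [label for label in source_labels if label in LABEL_PRIORITY]
    if not known:
        return None
    return max(known, key=LABEL_PRIORITY.index)
```

For example, a summary built from one “General” document and one “Highly Confidential” document would carry “Highly Confidential” under this rule.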

Furthermore, Data Loss Prevention (DLP) policies act as active guardrails. Purview can be configured to prevent Copilot from even processing items with specific labels if the policy forbids it. This dynamic safety net also extends to user interactions – if an employee attempts to share sensitive information, such as internal source code or credit card numbers, with a public AI model, Purview can warn the user or block the action entirely to ensure that the drive for efficiency never compromises data integrity.
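The warn-or-block behaviour described above can be approximated with a small Python sketch. It is not how Purview classifies content: the regular expression, the `evaluate_prompt` function and the `mode` parameter are assumptions made for illustration, and the Luhn checksum is used simply as a well-known way to reduce false positives on card-like numbers.

```python
import re

# Candidate card numbers: 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_PATTERN = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(digits):
    """Standard Luhn checksum over a string of digits."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:      # double every second digit from the right
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def evaluate_prompt(prompt, mode="warn"):
    """Return 'allow', 'warn' or 'block' for a prompt (hypothetical policy)."""
    for match in CARD_PATTERN.finditer(prompt):
        digits = re.sub(r"\D", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            return "block" if mode == "block" else "warn"
    return "allow"
```

Starting in `warn` mode and moving to `block` once the policy has been tuned is one way to introduce such a control gradually.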

Extending the security controls to third-party AI

Your security posture must account for the reality of a diverse digital estate. Most organisations do not operate solely within the Microsoft ecosystem – they utilise a variety of tools like ChatGPT and Claude as well. Purview DSPM extends its consistent safety net to over 100 third-party Generative AI applications.

This is achieved by onboarding devices to Microsoft Purview and utilising browser extensions. This technical mechanism allows IT leaders to apply the same rigorous Endpoint DLP rules to third-party sites as they do to internal Microsoft apps. By maintaining a unified policy framework across all AI interactions, organisations eliminate the security silos that threat actors frequently exploit, which helps ensure a hardened security posture regardless of where the employee chooses to work.

AI-powered data security investigations

When a potential breach or risky activity is detected, the window for response is incredibly narrow. The Microsoft Purview Data Security Investigations solution leverages Generative AI to transform the way analysts triage and remediate threats. Crucially, this is not a standalone tool: its integration with Microsoft Purview Insider Risk Management and Microsoft Defender XDR provides a holistic view of the ecosystem.

Analysts can now navigate vast datasets using three primary AI capabilities:

- Vector Search: This moves beyond basic keywords to understand intent. An analyst can find intent-based risks, such as a user searching for ways to bypass encryption, even if the specific keywords aren’t present.

- Categorisation: AI automatically sorts data by risk level and subject matter, allowing teams to prioritise high-risk assets immediately during a breach.

- Examination: This uncovers risks buried in data, such as compromised credentials or evidence of threat actor discussions, which would take human analysts days to uncover manually.
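To show why vector search can find intent where keywords are absent, here is a toy Python sketch. Real systems obtain embeddings from a trained model; the three-dimensional vectors below are hand-written stand-ins, and the documents, `QUERY` vector and `search` function are all hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Toy "embeddings". Axes loosely mean: circumventing controls, finance, social.
# In practice these would come from an embedding model, not be typed by hand.
DOCUMENTS = {
    "How do I turn off disk protection on my laptop?": [0.9, 0.1, 0.0],
    "Q3 budget forecast for marketing": [0.0, 0.95, 0.1],
    "Team lunch on Friday": [0.05, 0.0, 0.9],
}

# Stands in for the embedded query "attempts to bypass encryption".
QUERY = [1.0, 0.0, 0.0]


def search(query_vector, documents, top_k=1):
    """Rank documents by similarity to the query vector."""
    ranked = sorted(
        documents,
        key=lambda text: cosine(query_vector, documents[text]),
        reverse=True,
    )
    return ranked[:top_k]
```

The top result here contains neither “bypass” nor “encryption”, yet it ranks first because its vector points in the same direction as the query.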

This is particularly vital in “Risky Insider” scenarios, such as when a user shares files with external storage. The AI helps distinguish between a genuine threat and accidental misuse with unprecedented speed.

Preventing oversharing with proactive AI data governance

One of the most significant risks in AI deployment is internal oversharing. If your internal permissions are lax, for instance, folders shared with “Everyone except external users”, the AI assistant will faithfully surface that sensitive information to anyone who asks, regardless of their actual need to know.

Effective DSPM requires rigorous permission hygiene. Purview identifies sites with broad access, allowing you to tighten controls before AI is fully deployed. Our recommendation for a successful deployment path is to start in discovery mode: use the Purview AI Hub to gain visibility before moving to active blocking. That way you can refine your policies and avoid label fatigue (the phenomenon where users become overwhelmed by security prompts and begin to ignore them). Proactive governance ensures that the AI only amplifies your intelligence, not your risks.
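A simple way to picture the permission-hygiene check is a scan over site permissions for broad groups. The sketch below assumes you already have an export of sites and the groups they are shared with; the data shape, the `BROAD_GROUPS` set and `find_overshared` are hypothetical and do not reflect a Purview or SharePoint API.

```python
# Groups treated as "broad" for this illustration.
BROAD_GROUPS = {"Everyone", "Everyone except external users"}


def find_overshared(sites):
    """Return the names of sites shared with at least one broad group."""
    return [
        site["name"]
        for site in sites
        if BROAD_GROUPS & set(site["granted_to"])
    ]


# Invented sample export.
SITES = [
    {"name": "HR Payroll", "granted_to": ["Everyone except external users"]},
    {"name": "Finance Close", "granted_to": ["Finance Team"]},
    {"name": "Company News", "granted_to": ["Everyone"]},
]
```

Not every hit is a problem (a company news site may be broad on purpose), so the output is best treated as a review list rather than an automatic block list.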

Preparing for agentic security

The cybersecurity horizon is moving toward agentic security. We are transitioning from a world of manual oversight to an era where AI-driven security agents operate autonomously to predict, detect, and remediate risks before they manifest as breaches. By leveraging the best of artificial intelligence to secure the AI frontier, your organisation can maintain its competitive edge without sacrificing its most valuable asset: its data.

As you look at your current roadmap, ask yourself: is my organisation’s security posture built for the era of the agent, or are we still relying on the perimeter of the past?

Implementing Microsoft Purview DSPM for AI security

Successfully navigating the complexities of Microsoft Purview and DSPM for AI requires more than just tools – it requires a strategic partner, like Cloud Direct. We can help you explore the implementation of these advanced security features and architect a visionary data governance strategy. Our data and AI readiness assessments will define the precise next steps your organisation needs to take to ensure your transition to the AI frontier is both safe and cost-effective.

Discover, prioritise and protect your data with Cloud Direct’s Microsoft Purview DSPM & DLP Accelerator.

The concept of the Agent Ready Enterprise is more than just a buzzword; it represents a fundamental shift in how businesses operate. As we move beyond simple chatbots into an era of autonomous AI agents, your underlying infrastructure becomes your most critical asset.

What is an Agent Ready Enterprise?

An Agent Ready Enterprise is an organisation that has modernised its digital foundation to fully support and secure AI-driven automation. While legacy systems often act as bottlenecks, a modern environment allows AI agents to interact seamlessly with your data and applications to drive productivity.

- Infrastructure Maturity: Moving away from rigid, on-premises hardware to elastic, cloud-native services.

- Data Accessibility: Ensuring business data is hosted in a way that AI tools can access it securely without compromising privacy.

- Automation Integration: Having the ability to deploy Agentic workflows that can handle routine admin, scheduling, or data analysis.

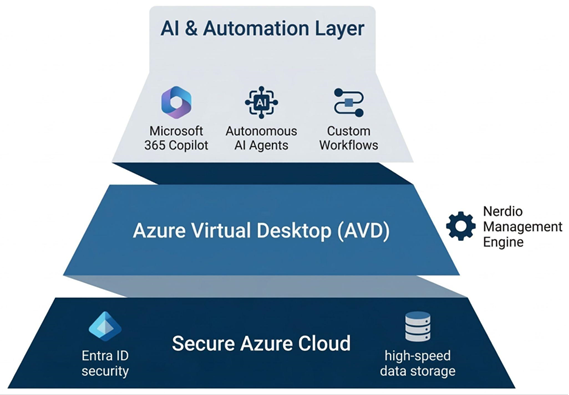

How the foundation stack powers your business: To transition to an AI-led model, your infrastructure must be viewed as a cohesive stack where each layer supports the one above it, as shown here:

- The Secure Azure Cloud (Foundation): Everything starts with your data. By hosting your environment in Azure, you benefit from enterprise-grade security with Entra ID and high-speed storage, ensuring your AI agents have a secure, high-performance home to operate within.

- Azure Virtual Desktop (Delivery): This is the engine room where your employees interact with their tools. To prevent this layer from becoming a cost-sink, Nerdio Manager runs invisibly in the background, using automation to ensure the environment is always right-sized and cost-optimised.

- AI & Automation (Innovation): With the foundation and delivery layers stabilised, you can safely deploy Microsoft 365 Copilot, autonomous agents, and custom workflows. This is where the real business value is unlocked, as these tools can now securely access your data through the Azure Virtual Desktop (AVD) interface.

How does Azure Virtual Desktop support my AI journey?

Transitioning to AVD is the first step in aligning your workspace with the Microsoft AI ecosystem. Because AVD is a native service, it places your users and their tools in the exact same environment where Microsoft’s most advanced AI models live.

Can I access Copilot for Azure through AVD?

While AVD provides the virtualised interface, its greatest strength is its proximity to Microsoft’s broader AI tools. When your desktop runs in Azure, your IT team can use Copilot for Azure to manage infrastructure more effectively, while your employees benefit from a workspace designed to integrate with Microsoft 365 Copilot. This proximity reduces latency and ensures that AI-driven features perform reliably across the global network.

How does AVD ensure data sovereignty for AI?

Security is the biggest hurdle for AI adoption. By hosting your workspace in Azure, your business data remains within a protected, compliant boundary. When you deploy AI agents, they interact with your data inside your secure tenant rather than sending information out to public models. This ensures that your intellectual property and customer data remain under your control at all times.

Why is AVD considered a future-proof platform compared to legacy VDI?

Legacy VDI solutions were built for a different era of computing. While they have adapted over time, they often remain bolt-on solutions to the cloud, whereas AVD is built directly into the fabric of Azure.

Does Microsoft innovate faster than legacy VDI providers?

Microsoft operates on a rapid feature release cycle that third-party providers like Citrix or Horizon often struggle to match. New Windows features, security enhancements, and AI integrations are developed for the native Azure environment first. By choosing AVD, you avoid the innovation lag where you have to wait months for a third-party provider to release a compatible update for the latest version of Windows.

Is AVD better integrated with the Microsoft stack?

The synergy between AVD and the rest of the Microsoft Cloud—including Entra ID (formerly Azure AD), Intune, and Microsoft 365—is unmatched. This deep integration means that as Microsoft rolls out the next generation of Agentic features in Windows, your AVD environment is ready to support them from day one. You aren’t managing a complex web of third-party plugins; you are using a unified stack designed to work together.

| | Legacy VDI (Citrix/Horizon) | Agent Ready AVD |
| --- | --- | --- |
| AI Integration | Limited by third-party middleware | Native access to Azure AI ecosystem |
| Update Velocity | Dependent on vendor compatibility | Immediate access to Windows updates |
| Data Boundary | Often spans multiple platforms | Unified within Microsoft Azure |
| Future Readiness | Focus on maintenance | Focus on innovation and AI |

How does Nerdio use AI to optimise cloud costs?

One of the primary benefits of an Agent Ready Enterprise is the ability to use automation to eliminate waste. This is where Nerdio acts as the powerful engine behind your AVD environment, using intelligent algorithms to manage your resources.

Nerdio Manager Copilot is an in-console AI tool with two AI-powered capabilities: it augments an LLM with Nerdio knowledge articles to answer admin queries, and it generates PowerShell scripts for Azure and Windows automation.

Nerdio Advisor analyses environments for cost and performance, recommending VM sizing, scheduling changes, and comparing AVD and Windows 365 costs, licences, and Cloud PC sizing before any resources are committed.

How does predictive auto-scaling reduce my bill?

Nerdio doesn’t just react to user logins; it uses intelligent patterns to predict when your team will start their day. AI-Powered Auto-scale Recommendations, introduced in version 6.5, analyse the deployment continuously and surface proactive configuration suggestions for efficiency, operating alongside rather than replacing the native AVD rule-based scaling engine.
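The idea of predicting when a team starts its day can be illustrated with a very small heuristic. This is not Nerdio’s algorithm: `prewarm_hour`, the `share` threshold and the `lead_hours` parameter are hypothetical, and the approach simply looks at historical login hours to suggest when capacity could be started ahead of demand.

```python
from collections import Counter


def prewarm_hour(login_hours, share=0.1, lead_hours=1):
    """Suggest an hour to start hosts, based on historical login hours.

    Finds the earliest hour by which at least `share` of past logins had
    occurred, then subtracts `lead_hours` so capacity is ready in advance.
    Returns None when there is no history.
    """
    if not login_hours:
        return None
    counts = Counter(login_hours)
    total = len(login_hours)
    seen = 0
    for hour in sorted(counts):
        seen += counts[hour]
        if seen / total >= share:
            return max(hour - lead_hours, 0)
    return None
```

With a history of one login at 07:00, five at 08:00 and fourteen at 09:00, the default settings suggest starting hosts at 07:00, an hour before the first meaningful wave of logins. Such a suggestion would sit alongside rule-based scaling rather than replace it.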

Can AI-driven automation handle backend tasks?

Nerdio provides agentless automation through Scripted Action Groups and Scripted Sequences. Scripted Actions use Windows Scripts and Azure Runbooks to automate VM and Azure tasks, supporting scheduling, bulk execution, and creation of reusable action groups. Scripted Sequences deliver graphical, ordered task orchestration for Intune‑enrolled devices, including Windows 365 Cloud PCs, offering SCCM‑style task sequencing and step-by-step execution logs rather than the binary pass or fail status native Intune provides.

Why is an Azure Expert MSP essential for becoming Agent Ready?

The journey to an Agent Ready Enterprise involves more than just a technical migration; it requires a shift in strategy. Cloud Direct acts as your trusted guide, ensuring that your transition to AVD serves as a springboard for future growth.

How does Cloud Direct help me navigate the AI landscape?

Your journey toward a more automated future requires a strategic partner who understands the full lifecycle of cloud transformation. Cloud Direct supports you at every milestone of this evolution:

- Architecting the Transition: We manage the move from legacy VDI providers to Azure Virtual Desktop, ensuring your new environment is built on best practices for performance and cost-efficiency from day one.

- Building the Foundation: Once you are in the cloud, we focus on infrastructure maturity. This involves securing your data silos and refining your governance models so that your systems are technically primed to host intelligent, autonomous tools, preventing costly false starts.

- Identifying High-Impact AI: Rather than a one-size-fits-all approach, we act as your innovation consultants. We help you move beyond the hype to pinpoint exactly where AI Agents and Copilot integrations will solve your specific business challenges and accelerate your organisational growth.

How does Nerdio future-proof your investment?

By choosing AVD and Nerdio, your business is no longer stuck on a legacy platform. Instead, you are on a path of continuous improvement, supported by a platform that evolves as fast as the cloud itself.

- Co-Innovation & Early Access: Nerdio operates as more than just a vendor; they are a strategic co-engineering partner to Microsoft. Led by the former visionary behind Windows 365 and AVD, Nerdio receives early access to private previews of Azure features, often influencing the very APIs that power the platform. Their roadmap is uniquely customer-led, featuring a community-voting system where users directly shape the product, ensuring that the engine you rely on is built to solve your real-world challenges before they even reach the wider market.

- Scalable Innovation: As Azure introduces new Agentic capabilities, Nerdio provides the management layer you need to deploy them at scale across your entire workforce.

- Long-Term ROI: The combination of Cloud Direct’s strategy and Nerdio’s automation ensures that your IT environment is not just a cost centre, but a resilient platform for future innovation.

Transitioning from Citrix or Horizon to AVD is the definitive move for any business looking to lead in the age of AI. It removes the architectural weight of the past and replaces it with a secure, automated, and truly Agent Ready digital workspace.

Are you ready to build your foundation for the future?

Contact us below to talk to an expert about your AVD journey.

For nonprofits, data is deeply personal. It represents donors who place their trust in you, beneficiaries whose circumstances must be protected, and partners and regulators who expect the highest standards of care. As organisations explore AI tools like Microsoft Copilot, that responsibility intensifies.

AI’s real risk lies in what it can access.

AI exposes what you don’t know about your data

Most nonprofits believe they have a reasonable grip on their data estate. In reality, governance gaps are common: legacy SharePoint sites, over‑shared folders, archived spreadsheets, and permissions that have been inherited rather than intentionally designed.

Microsoft Purview is designed to surface those “unknown unknowns”.

By automatically mapping data across Microsoft 365 and connected systems, Purview gives organisations a clear view of:

- What data exists

- Where sensitive information lives

- How it’s classified, shared, and retained

This visibility is often the first breakthrough. It allows organisations to move from assumption to understanding.

Building strong data governance without slowing teams down

Governance is often seen as restrictive, but when implemented well, it does the opposite. Microsoft Purview creates a consistent, automated governance layer that supports both security and productivity.

Through sensitivity labels, retention policies, and data loss prevention, Microsoft Purview ensures protection travels with the data itself, wherever it’s stored or shared. This gives teams the confidence to use information appropriately, rather than relying on workarounds like password‑protected files or manual checks.

For leadership teams and trustees, Purview also provides something increasingly essential: demonstrable evidence of due care. As expectations rise around GDPR enforcement and emerging AI standards, informal data practices are no longer enough.

Why Purview must come before AI tools like Copilot

Microsoft Copilot is remarkably effective at surfacing information. That’s precisely why strong data governance is a prerequisite.

Copilot respects user permissions, but many organisations suffer from permission sprawl. Files innocently set to “Everyone except external users” years ago can suddenly become highly visible the moment AI is introduced.

Purview provides the context Copilot needs. By ensuring data is properly classified and protected, organisations can confidently deploy AI knowing insights are drawn from trusted, well‑governed sources.
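The permission sprawl described above can be illustrated with a short Python sketch. It does not call Microsoft Purview, SharePoint, or any Microsoft API. The `SharedItem` structure, the group names in `BROAD_GROUPS`, and the sample data in the note below are all hypothetical, and simply show the kind of check a governance review performs.

```python
from dataclasses import dataclass
from typing import List, Optional

# Groups treated as "broad" in this sketch (an assumption, not a Purview setting).
BROAD_GROUPS = {"Everyone", "Everyone except external users"}


@dataclass
class SharedItem:
    path: str
    shared_with: List[str]
    sensitivity_label: Optional[str] = None  # None means the item is unlabelled


def find_overshared(items: List[SharedItem]) -> List[SharedItem]:
    """Return items shared with a broad group, unlabelled items first."""
    flagged = [item for item in items if BROAD_GROUPS.intersection(item.shared_with)]
    # sorted() is stable: unlabelled items (key False) come before labelled ones.
    return sorted(flagged, key=lambda item: item.sensitivity_label is not None)
```

Given a hypothetical donor spreadsheet shared with "Everyone" and carrying no label, the sketch would list it ahead of a labelled file with the same broad sharing, because unlabelled, widely shared content is usually the first thing to review before enabling Copilot.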

A practical path to adoption

Adoption of Microsoft Purview usually follows either a Purview-first or a use-case-led approach. A Purview-first approach supports governance and visibility across your entire data estate, but it requires greater upfront investment before business outcomes are realised. A use-case-led approach ties adoption to a prioritised AI project, which can make it easier to gain buy-in from senior leadership.

Our guide explores these approaches and provides an easy-to-follow roadmap for Purview implementation.

Download the guide

If your organisation is exploring AI and wants a clearer understanding of how Microsoft Purview underpins safe, responsible innovation, our guide dives deeper into:

- Why Purview is foundational to AI readiness

- Common data risks nonprofits underestimate

- How Purview and Copilot work together

- A phased, realistic roadmap to implementation

Employee use of AI agents is inevitable. But for those in senior IT roles, the challenge is not whether to adopt AI, but how to do it safely and effectively. We examine the key issues and show how a well-structured assessment can accelerate AI readiness.

Do you see the strategic importance of AI, feel pressurised to ‘do something’, but remain unsure how to operationalise it safely and at scale? If so, you’re not alone.

Although IT hesitancy reflects a real and valid awareness of the risks and inherent ambiguities, the pressures to act will only increase.

Not a normal rollout

Part of the challenge is that AI isn’t just another technology rollout – in many ways it’s different:

- Employees can access and synthesise vast amounts of organisational data

- Non-developers can create their own automations and agents

- Outputs are dynamic, not deterministic, and

- Value comes from thousands of small improvements rather than one big system.

This changes the way we need to govern and manage the software, as well as the way employees use it – increasingly they will create and delegate work to it.

Why Agentic AI is daunting

When we talk to IT leaders, the same concerns come up repeatedly: data risks, governance needs, unclear readiness, and pressure to act.

There are several very real areas of uncertainty that can easily become barriers to action.

Security and data risks

Tools like Microsoft 365 Copilot have the capability to surface organisational data in new ways, raising questions around data accessibility and visibility:

- What can Copilot access?

- Will sensitive data leak?

- Is our permissions model strong enough?

Governance shortcomings

Unlike traditional IT systems, agentic AI brings with it non-deterministic outputs, employee-built agents, and dynamic workflows. This is prompting IT leaders to ask:

- Who owns these agents?

- What’s allowed and what’s not?

- How do we audit decisions?

Most existing governance models haven’t anticipated these sorts of questions.

The shift to ‘citizen AI development’

Tools like Microsoft Copilot Studio allow non-developers to build agents. While this creates huge opportunity, it lessens central control and it raises questions around how this should be handled.

Some have likened it to the early anxiety around Power Platform adoption, but this comparison risks underplaying the potentially far greater consequences.

Who is responsible for AI?

While AI technologies typically fall under IT’s remit, AI as a strategic initiative may not. Should it be driven by Digital, by the business, or shared? If so, how should ownership be structured? How should adoption be scaled?

ROI is hard to quantify

Where budgets are linked to ROI, at least initially, the returns can be hard to quantify. The benefits are likely to be widely dispersed, gains are incremental, and the real value comes from many small improvements.

With so many unanswered questions and no clear starting point, there’s a danger of inactivity.

Gaining the confidence to press ‘Go’

For many, the fundamental problem isn’t one of technology. Most organisations already have the foundations in place with Microsoft 365, SharePoint and Teams data, identity and access controls, and sound security and governance. While these may need building on, it’s not the technology that’s holding IT back. It’s a lack of clarity:

- Where are the risks?

- What is needed to safely proceed?

- What needs fixing first?

- How do we justify investment and timing?

This is where a structured readiness assessment matters.

How a Microsoft 365 Copilot Readiness Assessment will help

A well-designed Copilot readiness assessment will provide clarity and move you from uncertainty to informed action.

Done properly, it will deliver five critical outcomes:

- Reduced adoption risk

By identifying where data exposure, access controls or governance gaps exist, these can be proactively addressed.

- An objective, documented view of readiness

It provides an evidence-based assessment of Copilot readiness, to enable confident go/no-go or phased rollout decisions. It will also help to combat ill-considered demands to ‘just do it’.

- Prioritised, actionable recommendations

Not everything needs fixing, or fixing now. The assessment focuses effort on the areas that will have the greatest impact on risk reduction and value realisation.

- Alignment of IT, security, and the business

Through structured discovery and analysis, it creates a shared understanding, reducing stakeholder friction and accelerating decision-making.

- Clarity without an open-ended commitment

Delivered as a fixed-price, time-bound engagement with clear governance and sign-off, it gives meaningful outcomes without launching a large, undefined programme.

The risks of delay

A recent MIT report reveals that over 90% of employees are already using gateway AI tools like ChatGPT, although only 40% of companies have purchased licences.

Increasingly, employees will have friends who are progressing beyond this and using generative AI to improve their work.

The implication is clear: if you don’t provide approved tools, guidance, and safe environments, employees will explore external AI tools and build unmanaged solutions.

It’s critical that the organisation ensures AI use happens in the right way.

Regaining the initiative

Successful organisations aren’t treating AI as a single project, but as the introduction of a capability to be progressively developed and scaled.

That starts with:

- Understanding your current state

- Developing a clear view of what needs to be addressed

- Enabling early use cases, and

- Building confidence across the organisation.

A structured readiness assessment doesn’t just tell you where you are — it gives you a defensible, practical path forward.

And right now, that’s exactly what many IT leaders need.

Learn more about Cloud Direct’s Microsoft 365 Copilot Readiness Assessment.

Request a call with one of our experts using the form below, and find out how we can remove AI uncertainty and provide you with a clear route to adoption.

Written by Jonathan Moore, Azure Architect at Cloud Direct.

In our previous post, we explored why modern businesses are moving away from the “complexity tax” of legacy VDI providers. Once you understand the cost and performance benefits of a cloud-native stack, the next logical question is: how do you actually get there without disrupting your daily operations?

Transitioning from Citrix or Omnissa Horizon to Azure Virtual Desktop (AVD) is not a simple lift-and-shift migration. Instead, it is a service replacement. This means we aren’t just moving old problems to a new cloud; we are replacing a legacy way of working with a modern, high-performance platform. This shift serves as the critical bridge to becoming an Agent Ready Enterprise, where your infrastructure is primed to support the next generation of AI-driven automation.

What does a full production rollout of Azure Virtual Desktop look like?

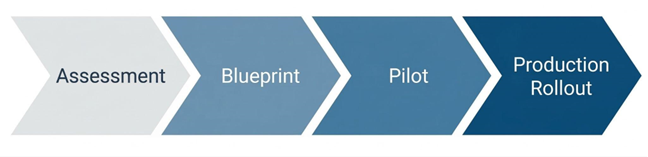

A successful move to AVD requires a structured approach that prioritises user experience and data integrity. By following a proven framework, you can decommission your legacy hardware with total confidence.

- Environment Assessment: Before a single file is moved, we conduct a comprehensive audit of your existing Citrix or Horizon setup. This identifies which applications are being used, how much processing power your users actually need, and where your data currently lives.

- The Blueprint Phase: Cloud Direct Architects will design your Azure Landing Zone. This is the foundation of your new environment, ensuring that networking, security, and identity management (via Microsoft Entra ID) are configured to enterprise standards from the start.

- Pilot Validation: We don’t move everyone at once. We start with a Pilot group—a subset of users who test the new environment. This allows us to ensure application parity, meaning your team can do everything they did before, only faster and more securely.

- Full Production Rollout: Once validated, we execute a structured replacement. Users are transitioned to the AVD platform in phases, ensuring that support teams are available to help with any day-one questions, resulting in minimal friction for the workforce.
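The pilot-then-phases pattern in the steps above can be sketched in a few lines of Python. This is an illustrative planning helper only, not a Cloud Direct or Nerdio tool. The function name, the group sizes, and the user names in the note below are assumptions.

```python
from typing import Dict, List


def plan_waves(users: List[str], pilot_size: int, wave_size: int) -> Dict[str, list]:
    """Split users into one pilot group followed by fixed-size rollout waves."""
    if pilot_size < 0 or wave_size <= 0:
        raise ValueError("pilot_size must be >= 0 and wave_size must be > 0")
    pilot = users[:pilot_size]
    remaining = users[pilot_size:]
    # Slice the remaining users into consecutive waves; the last wave may be smaller.
    waves = [remaining[i:i + wave_size] for i in range(0, len(remaining), wave_size)]
    return {"pilot": pilot, "waves": waves}
```

With ten hypothetical users, a pilot of two, and waves of three, the plan contains the pilot group plus waves of three, three, and two users. In practice the grouping would follow departments and application dependencies rather than list order.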

How does Cloud Direct guide the rollout process?

Moving your entire desktop infrastructure can feel like a daunting technical hurdle. Cloud Direct acts as your strategic partner, translating complex Azure requirements into clear business outcomes.

What is a Service Replacement Strategy?

Rather than treating this as a one-off IT project, Cloud Direct manages the transition as a professional service. We focus on mitigating risk by ensuring your legacy environment and your new AVD environment can coexist during the transition. This ‘parallel running’ ensures that if any issues arise, your team remains productive on the old system while we fine-tune the new one.

How do you handle Application Modernisation?

One of the biggest hurdles in any VDI move is legacy software. Our experts advise on whether to virtualise these apps using modern tools like App Attach or transition them to cloud-native, SaaS alternatives. This ensures your new environment isn’t cluttered with outdated delivery methods.

Is there funding available for this transition?

As an Azure Expert MSP, Cloud Direct can help you navigate various Microsoft investment programmes. These initiatives are often available to help offset the initial costs of assessment and migration, making the move to AVD even more financially attractive for your business.

How does Nerdio simplify the rollout and management?

If Azure is the engine, Nerdio is the sophisticated dashboard that allows us to drive it efficiently. Cloud Direct leverages Nerdio Manager to automate the technical heavy lifting that usually makes VDI migrations slow and expensive.

- Rapid Image Management: In legacy systems, updating a Golden Image (the template for your desktops) could take hours. With Nerdio, Cloud Direct can create, test, and deploy updated desktop templates across your entire company with just a few clicks.

- User Profile Resilience: We use Nerdio to manage FSLogix, the technology that handles user profiles. This ensures that when a user logs in, their files, settings, and Outlook signatures are there instantly, regardless of which virtual machine they are assigned to.

- Direct Automated Deployment: Instead of building your environment piece by piece, Cloud Direct uses Nerdio to deploy fully functional host pools and session hosts directly into your Azure subscription. This automation eliminates human error and ensures your infrastructure is ready in days, not months.

How does Nerdio enhance operational efficiency?

Once the rollout is complete, the focus shifts from getting it live to keeping it perfect. Nerdio provides Self-Healing capabilities that proactively maintain the health of your virtual desktop environment.

Can the system fix itself?

Nerdio’s automated troubleshooting can detect common session errors—like a hung desktop or a connectivity glitch—and resolve them automatically without a human ever needing to raise a support ticket. This Self-Healing approach drastically reduces downtime.

How do we monitor performance?

Through the Nerdio interface, Cloud Direct gains real-time insights into the actual user experience. We can see if a specific department is experiencing lag or if an application is consuming too many resources, allowing us to proactively tune the environment before your employees even notice a problem.

| Feature | Legacy VDI (Citrix/Horizon) | AVD with Nerdio & Cloud Direct |
| --- | --- | --- |
| Updates | Manual, time-consuming patching | Native automated image updates or through integration with Intune image management |
| Scaling | Rigid; hardware must be always-on | Intelligent Auto-scaling (Pay-as-you-use) |
| Support | Reactive; wait for things to break | Proactive; Self-Healing & 24/7 Monitoring |
| Onboarding | Can take days for new hardware | Near-instant virtual provisioning |

How does Cloud Direct ensure long-term success?

The launch date is just the beginning of our partnership. Cloud Direct provides a continuous optimisation service to ensure your investment stays secure and performant as your business evolves.

Why is 24/7 Managed Excellence important?

Technology doesn’t sleep, and neither does our support. Cloud Direct can provide 24/7 managed support to ensure business continuity. Whether it’s a security patch that needs applying at 2 AM or a scaling adjustment for a new global team, our experts are always on hand.

What is a Strategic IT Roadmap?

The cloud moves fast. We don’t just set and forget your AVD environment. We meet with you regularly to discuss how new Azure features—particularly those involving AI and automation—can be integrated into your workspace. Then, we ensure your IT environment isn’t just a cost centre, but a platform for future growth.

What’s Next

Find out more about how you could transition to AVD and if it’s the right decision for you by signing up to our upcoming webinar “Modernising VDI: The Practical Path to AVD With Nerdio & Cloud Direct” at 10:00 am on the 29th of April 2026.

AI agents are quickly becoming the digital teammates we never knew we needed. If you’re not sure where to get started, we’ve put together this A–Z Agent Guide to spark your inspiration and show just how versatile agents can be across roles, teams, and industries. From practical, high-impact helpers to your personal agony aunts, we’ve got it all covered.

You haven’t begun using agents? No problem, we have recently written a blog on how to build your own. It includes key considerations to ensure you are building agents that are not only increasing your productivity, but that are compliant and grounded within your organisation’s policies.

Whether you’re just starting your AI journey or looking to scale your agent strategy, use this guide to discover what’s possible.

The AI Agent A-Z Index

A – Analyst Agent

Your always in-the-know agent. The Analyst Agent can pull data from across your systems, spot patterns, and serve up clear summaries so you can make decisions based on evidence, not instinct alone. This is a great one to have on hand when a senior leader asks for your latest project results.

B – Branding Agent

The guardian of your brand. Whether you want to ensure your personal brand stays consistent or get through company brand reviews with less feedback, this agent reviews copy and visuals for tone and style, suggesting tweaks so every asset is aligned.

C – Career Coach Agent

Your personal development partner. It can help you map skills to roles, suggest training paths, and provide tailored guidance to help you excel. Use this agent when preparing for performance reviews and potentially secure your next promotion.

D – Data Visualiser Agent

The spreadsheet specialist sidekick. It turns complex datasets into intuitive charts, dashboards, and infographics that stakeholders can understand at a glance.

E – Executive Summary Agent

The TL;DR star. This agent turns long reports, meeting notes, or research papers into concise, high‑impact summaries tailored for decision‑makers. Whether you would like to gain insights from an industry report in a hurry or need to condense information for a company presentation, this agent has your back.

F – Financial Forecaster Agent

Your numbers navigator. This agent builds and updates financial models, forecasts revenue and costs, and highlights variances so finance and business leaders stay aligned.

G – Governance Agent

The rules-and-guardrails specialist. It monitors how agents and tools are being used, checks activity against your policies, and can alert you when something looks offside.

H – HR Management Agent

A digital HR partner for employees and managers. It can answer policy questions, help you find useful employee documents, and guide managers through tricky employee processes.

I – Inventory Management Agent

Your real-time stock scout. It tracks inventory levels, predicts reorder points, and can even draft purchase orders to prevent stockouts or over-ordering. If you manage the merchandise cupboard or employee devices, this agent will give you the availability lowdown in an instant.

J – Justification Agent

Your persuasion partner. When you’re preparing business cases, this agent pulls relevant data, benchmarks, and risks into a clear rationale you can use to impress and quickly gain buy-in from business leaders. You will never go into a meeting unprepared again.

K – Keyword Agent

Your SEO and search whisperer. It generates keyword lists, clusters terms by intent, and suggests optimised copy so your content gets found at the right time by the right people.

L – Language Agent

A multilingual wordsmith. It translates, localises, and rephrases content while keeping your tone of voice consistent across regions and audiences. Your content will never get lost in translation with this agent.

M – Market Reporter Agent

Your always-on market analyst. It scans news, reports, and competitor activity, then summarises implications so you stay ahead of shifts in your industry and sector.

N – Negotiation Coach Agent

A pocket-size negotiation trainer. It helps you plan negotiation strategies, role-play conversations, and prepare talking points and trade-offs before you step into the room.

O – Onboarding Agent

The friendly first-week buddy. The HR or recruiting team can create this agent to help ensure new starters have everything they need to succeed from the beginning. It can walk new employees through key tools, people, and processes, answering common questions.

P – Prompt Coach Agent

Your prompt architect. It teaches you and your teams how to ask better questions and refine prompts, so every agent you use understands you better and performs better. It can also save you time as you’ll receive a more appropriate response faster, rather than trying to get the right response with multiple prompts.

Q – Quality Control Agent

The detail checker. If you’ve got an important presentation coming up and need to ensure no faults can be picked up, this is the agent for you. It reviews content, data, and documents for accuracy, consistency, and compliance with your standards, making sure nothing slips through.

R – Researcher Agent

A tireless desk researcher. It gathers information from internal and external sources, organises it into structured notes, and highlights key insights and gaps. The good news for Microsoft 365 Copilot users is that the Copilot Research Agent is already configured and ready to use.

S – Survey Agent

Your feedback collector. It designs surveys, suggests questions, analyses responses, and summarises sentiment so you can quickly act on what customers or employees are telling you.

T – Trend Spotter Agent

Your opportunity scout. What’s in vogue? This is the agent that knows. It monitors behaviours, content, and performance over time to spot emerging trends. This can help you get ahead by moving from reactive to proactive.

U – UX Agent

Your user-experience expert. It reviews online journeys, suggests improvements, and summarises user feedback to help teams design smoother, more intuitive experiences for both current customers and new prospects.

V – Vendor Directory Agent

The supplier-savvy one. It maintains an up-to-date view of vendors, contracts, and performance metrics, and can recommend the best-fit supplier for any given need.

W – Writer Agent

Your copy partner. From emails and social posts to proposals and blog drafts, it helps you get from blank page to first draft in minutes. Top tip: when setting up this agent make sure to provide it with a tone of voice using previous materials you have created so that it sounds more like you.

X – Xtra Pair of Hands Agent

A catch-all helper for the everyday grind. From filing notes and summarising meetings to chasing actions and tidying documents, this agent picks up the small tasks that eat up your day. It can even just be a sounding board for when you’re unsure. If there is one agent to rely on, this would be it.

Y – Year-in-Review Agent

Your annual storyteller. It pulls together performance metrics, milestones, customer quotes, and highlights into polished “year in review” summaries for leadership, board, or all-hands meetings.

Z – Zero-Inbox Agent

The inbox declutterer. It categorises, summarises, and drafts replies so you can tame email overload and get closer to that mythical “Inbox Zero”.

In Summary

Hopefully this A–Z Agent Guide has sparked your imagination and shown just how many ways AI agents can support and elevate the work you do. Don’t feel like you need to use them all at once, though. Often, just one thoughtfully chosen agent can make a meaningful difference across a team or workflow.

Ready to take the next step?

If you’d like to understand how to implement AI agents effectively across your organisation, from ensuring you have the right data infrastructure in place to identifying high‑value use cases, our experts are here to help. Reach out to the team using the form below.

Or to find out more about our AI agent offering, check out our Copilot Landing Zone Accelerator.

Written by Jonathan Moore, Azure Architect at Cloud Direct.

The world of remote work has changed significantly, and many businesses are finding that the virtual desktop solutions they relied on for years are no longer keeping pace. If you’re currently managing a legacy environment like Citrix or Horizon, you may be facing rising costs and increasing complexity. Switching to Azure Virtual Desktop (AVD) is often the most direct path to modernising your infrastructure while reducing overheads.

Why should my business consider moving from Citrix and Horizon to Azure Virtual Desktop?

For many organisations, the decision to move is driven by the need for a more agile, cost-effective platform. Azure Virtual Desktop is built specifically for the cloud, which offers several distinct advantages over traditional VDI (Virtual Desktop Infrastructure) providers.

- Cost Efficiency: Legacy Citrix and Horizon environments often require upfront investment in hardware or complex per-user licensing. AVD operates on a flexible, consumption-based model. You only pay for the cloud resources you actually use, which can significantly lower your total cost of ownership (TCO).

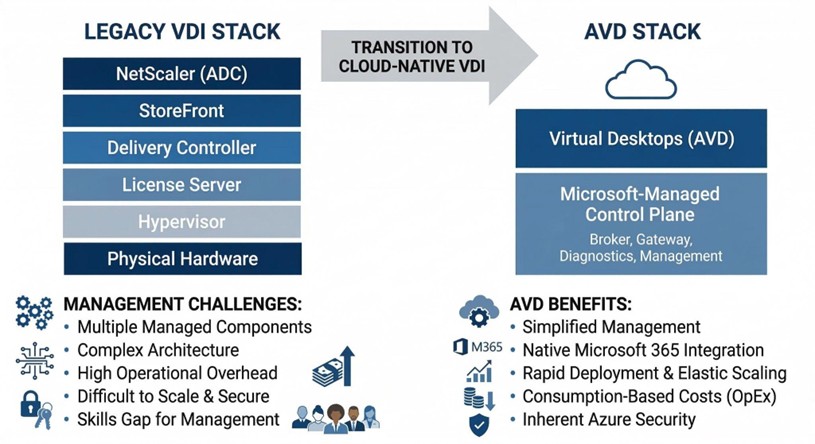

- Reduced Infrastructure Complexity: Legacy VDI typically requires a multi-layered stack, including NetScalers, StoreFronts, and Delivery Controllers. AVD simplifies this by providing a Microsoft-managed control plane. This means fewer components for your IT team to maintain, patch, and secure.

- The Best Microsoft 365 Experience: Because AVD is a native Microsoft service, it is uniquely optimised for applications like Teams and Outlook. Beyond performance, there is a major licensing advantage: most businesses already using Microsoft 365 (such as Business Premium or E3/E5 plans) already own the rights to run Windows 10 or 11 Enterprise. By switching to AVD, you can utilise “Windows 10/11 Multi-session” technology. This allows multiple users to share a single virtual machine simultaneously, drastically reducing your cloud footprint and eliminating the need for additional, expensive VDI or Remote Desktop Services (RDS) licences.
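The cost points above come down to simple arithmetic, sketched here in Python. Every figure mentioned in the note below (user count, users per VM, hourly rate, running hours) is a made-up assumption for illustration, not Azure pricing, and the function names are hypothetical.

```python
import math


def vms_required(users: int, users_per_vm: int) -> int:
    """Number of virtual machines needed for a given multi-session density."""
    if users_per_vm <= 0:
        raise ValueError("users_per_vm must be positive")
    return math.ceil(users / users_per_vm)


def monthly_compute_cost(vm_count: int, hourly_rate: float, hours_per_month: float) -> float:
    """Compute cost under a consumption model: you pay only for hours run."""
    return vm_count * hourly_rate * hours_per_month
```

As a rough example, 100 users at an assumed 10 users per multi-session VM need 10 VMs rather than 100 single-session machines. At an assumed rate of 0.50 per VM-hour, running those 10 VMs always-on for 730 hours costs 3,650 a month, while running them for 220 working hours costs 1,100. Multi-session VMs are typically larger than single-user ones, so a real comparison should use the actual VM sizes and rates for your workload.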

While the cost and performance benefits are clear, the most significant shift is the reduction in architectural weight. Moving to AVD allows you to shed the burden of maintaining complex middleware and proprietary hardware layers.

The following diagram illustrates how transitioning to a cloud-native stack removes the “complexity tax” associated with legacy VDI, shifting the maintenance of the control plane entirely to Microsoft.

By collapsing these layers, your IT resources are freed from the cycle of patching and managing infrastructure, allowing them to focus on the desktops and applications that actually drive your business.

How does Microsoft Intune improve device management in an AVD environment?

Managing a workforce that uses both physical laptops and virtual desktops can often lead to management silos. Microsoft Intune bridges this gap, allowing you to manage every endpoint from a single, unified interface.

- Unified Endpoint Management: Instead of using different tools for physical and virtual machines, your IT team can deploy policies, applications, and security updates to everything at once. This consistency reduces the risk of human error and ensures a uniform experience for your employees.

- Zero Trust Security: Intune allows you to implement Conditional Access. This means the system checks the health of the device and the identity of the user before allowing them into the virtual desktop. If a device is unpatched or a login looks suspicious, access is blocked automatically.

- Simplified Policy Deployment: You can move away from complex, on-premises Group Policies (GPOs) and use modern, cloud-based configurations. This makes it much faster to onboard new employees or update security settings across the entire company.
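The Conditional Access behaviour described above can be pictured as a small decision function. The Python below is a toy model of the idea, not the Intune or Microsoft Entra policy engine. The `SignIn` fields, the risk levels, and the returned decision strings are assumptions made for this sketch.

```python
from dataclasses import dataclass


@dataclass
class SignIn:
    user: str
    device_compliant: bool  # e.g. patched and meeting policy (assumed flag)
    mfa_passed: bool
    risk_level: str         # "low", "medium", or "high" (assumed scale)


def evaluate_access(sign_in: SignIn) -> str:
    """Verify device health and identity before granting desktop access."""
    if not sign_in.device_compliant:
        return "block"        # unpatched or non-compliant device
    if sign_in.risk_level == "high":
        return "block"        # sign-in looks suspicious
    if not sign_in.mfa_passed:
        return "require_mfa"  # identity not yet strongly verified
    return "allow"
```

The ordering reflects the Zero Trust principle in the list above: nothing is trusted because of where it connects from, and access is granted only after both the device and the user have been verified.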

To help you visualise the shift in management philosophy, the table below compares the traditional approach used in many Citrix or Horizon environments with the modern standard provided by Microsoft Intune.

Comparison of Legacy GPO Management vs. Modern Intune Management:

| | Legacy GPO Management (Citrix/On-Prem) | Modern Intune Management (AVD) |
| --- | --- | --- |
| Network Requirement | Requires VPN or corporate network line of sight. | Cloud-native; works anywhere with an internet connection. |
| Device Visibility | Limited; often only updates when devices are “on-net”. | Real-time visibility of both physical and virtual endpoints. |
| Security Model | Perimeter-based (Trust everything inside the network). | Zero Trust (Verify every identity and device health status). |
| Update Speed | Can be slow; dependent on replication cycles. | Instant deployment of policies and security patches. |
| User Experience | Settings can be inconsistent across different devices. | Uniform experience across laptops, mobiles, and AVD. |

This transition to Intune ensures that your IT team spends less time troubleshooting connectivity issues and more time focused on high-value projects that drive your business forward.

What are the risks of staying with legacy VDI solutions?

While it may feel safer to stick with a familiar system, remaining on legacy platforms can create long-term strategic risks for your business.

- Technological Stagnation: Third-party providers often struggle to keep up with the rapid pace of Microsoft’s cloud updates. By staying on a legacy platform, you may find that new Windows features or security enhancements aren’t available to your users until months after they’ve been released.

- The Skills Gap: Finding and retaining engineers with deep expertise in complex legacy VDI environments is becoming increasingly difficult and expensive. Conversely, Azure skills are more widespread, making it easier to find the talent needed to support your business.

- Security Debt: Every extra layer of third-party software in your stack is another potential point of failure. Maintaining legacy gateways and controllers creates a larger attack surface that requires constant, manual patching.

How do Cloud Direct and Nerdio work together to improve your AVD experience?

Moving to the cloud doesn’t mean you have to go it alone. While Azure provides the foundation, achieving the best performance requires a combination of expert strategy and powerful automation. This is where the partnership between Cloud Direct and Nerdio becomes essential.

Cloud Direct acts as your strategic architect, designing a secure Azure environment that fits your specific business needs. However, managing a high-performance virtual desktop environment at scale can be complex. To solve this, Cloud Direct uses Nerdio Manager as the optimisation engine behind the scenes. Nerdio is a specialised management platform that automates the deployment and scaling of AVD, ensuring that the system is always running at peak efficiency without your team having to manage the technical minutiae.

How does Cloud Direct help with this transition?

Cloud Direct is an Azure Expert MSP (Managed Service Provider) with a proven track record of helping businesses navigate complex transitions. We don’t just provide a technical lift and shift; we provide a comprehensive service replacement strategy.

As your trusted guide, Cloud Direct takes the time to understand your business workflows to ensure that your new environment is built for productivity from day one. Crucially, we provide 24/7 managed support. This means that your virtual environment is monitored and maintained around the clock, providing you with peace of mind that your employees will always have access to the tools they need to stay productive, regardless of where they are working.

How does Nerdio support cost efficiency?

One of the biggest concerns for any business moving to the cloud is “bill shock”—the fear that costs will spiral if virtual machines are left running unnecessarily. Cloud Direct solves this by leveraging Nerdio’s powerful automation features.

Nerdio addresses cost efficiency through several key mechanisms:

- Intelligent Auto-Scaling: Nerdio automatically detects when users log off and powers down unused virtual machines. During the evening or weekends, your Azure footprint shrinks to the bare minimum, and as your team starts work in the morning, Nerdio pre-stages the desktops so they are ready the moment they are needed.

- Storage Optimisation: Even when a machine is turned off, the storage it uses costs money. Nerdio can automatically swap expensive high-performance disks for cheaper storage when a machine is shut down, potentially saving an additional 20-30% on storage costs.

- Clear Financial Visibility: Nerdio provides granular reporting that shows exactly who is using what and how much it costs. This allows Cloud Direct to provide you with accurate forecasting and ensure you are getting the highest possible return on your investment.
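The scaling and storage mechanisms above can be sketched in a few lines. This is an illustration of the general idea only, not Nerdio's implementation: the function names, the `warm_hosts` parameter, and the tier labels are all hypothetical.

```python
import math

def hosts_to_run(active_sessions: int, sessions_per_host: int,
                 warm_hosts: int = 0) -> int:
    """Hosts to keep powered on: enough for current sessions plus spares.

    warm_hosts models pre-staging desktops before the working day starts.
    """
    if active_sessions < 0 or sessions_per_host < 1 or warm_hosts < 0:
        raise ValueError("invalid scaling inputs")
    return math.ceil(active_sessions / sessions_per_host) + warm_hosts

def disk_tier(vm_running: bool) -> str:
    """Pick a storage tier: fast disks while running, cheaper when stopped."""
    return "premium" if vm_running else "standard"

# Overnight: nobody logged on, nothing pre-staged.
print(hosts_to_run(0, 8), disk_tier(False))
# Early morning: no sessions yet, but two hosts pre-staged for the team.
print(hosts_to_run(0, 8, warm_hosts=2), disk_tier(True))
```

The design choice worth noting is that compute and storage are decided separately: a stopped machine still incurs disk charges, so the tier swap saves money even after the VM itself is powered down.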

By combining the strategic expertise of Cloud Direct with the automation power of Nerdio, your business can move away from the limitations of Citrix or Horizon and into a modern, secure, and highly cost-effective digital workspace.

What’s Next

Find out more about how you could transition to AVD and if it’s the right decision for you by signing up to our upcoming webinar “Modernising VDI: The Practical Path to AVD With Nerdio & Cloud Direct” at 10:00am on the 29th of April.

Written by Robin Dadswell, Principal Consultant

When I’m talking to customers, one subject keeps coming up: Microsoft Sentinel. And there’s a lot of confusion. Is it being retired? Is it being absorbed into Defender? Do we need new licences? I want to explain what’s happening, when, and what it means for you.

But before we get into the detail, let’s start with some clarity. Microsoft Sentinel is not being retired. Its Security Information and Event Management (SIEM) and Security Orchestration, Automation, and Response (SOAR) capabilities remain fully supported. What is changing is where and how it is managed: Sentinel is moving from the Azure portal into the Microsoft Defender portal as part of Microsoft’s broader ‘unified security operations’ strategy.

For IT teams, SOC analysts, architects and governance leads, here’s what that means both operationally and technically.

Microsoft Sentinel and Defender: in a nutshell

First a quick explanation – please skip ahead to What is and isn’t changing if you’re already familiar with Sentinel and Defender.

Microsoft Defender is Microsoft’s broad threat protection platform. It’s a family of products, each focusing on a different aspect of your environment: Defender for Endpoint (laptops, servers), Defender for Identity (Active Directory), Defender for Cloud (cloud workloads), Defender for Office 365 (email and collaboration), and Defender for Cloud Apps (SaaS).

Defender sits close to the asset and actively flags or blocks threats. It’s protective and preventative.

Microsoft Sentinel, by contrast, is a Security Information and Event Management (SIEM) and Security Orchestration, Automation, and Response (SOAR) platform. It works across your environment, taking data from Microsoft Defender, firewalls, identity providers, third-party security tools, and cloud platforms (e.g. Azure and AWS). It collects security logs, correlates signals, detects suspicious patterns, generates incidents for investigation, and automates response workflows.

What is and isn’t changing in the Sentinel move

The major change is the management experience. Sentinel will be managed exclusively through the Microsoft Defender portal, with the Sentinel experience in the Azure portal being retired.

The aim is to provide a unified security operations experience with a single pane of glass for both SIEM and Extended Detection and Response (XDR). It ensures a consistent user interface for SIEM and XDR, with native cross-domain correlation of incident timelines and evidence.

What is NOT changing:

- Sentinel’s core SIEM functionality remains

- Azure Log Analytics will remain the underlying data platform

- KQL (Kusto Query Language) analytics rules continue to operate

- Sentinel and Defender access controls remain in place

- Automation playbooks continue to function

- Sentinel licensing remains separate from Defender (see later).

Timelines

- New Sentinel workspaces are already automatically onboarded to the Defender portal

- July 2026 (a date you may have heard) was the original target date for retiring Sentinel in the Azure portal

- 31 March 2027 is the extended deadline for when Sentinel will cease to be supported in the Azure portal.

What IS changing

Underneath the surface there are some noteworthy changes affecting correlation, alert logic, and data schemas. Let’s look at each of these in a bit more detail.

Correlation is more cohesive

Historically, Sentinel has relied on KQL-driven analytics rules, scheduled queries and Fusion detection, whereas Defender performed its own correlation within Microsoft 365 security.

In the unified Defender portal, alerts from Sentinel and Defender XDR both feed into a shared incident model. Sentinel’s legacy Fusion engine is disabled as part of the move to the Defender portal, at which point correlation is handled by the Defender XDR logic. This brings incident creation into line with other Defender alerts, giving a single logic flow for all alert generation.

What does not change:

- Custom KQL detections will still run

- Log-based analytics will remain intact

- Workspace-level data architecture remains.

What does change:

- Incident grouping logic is expected to evolve

- Alerts may appear more consolidated

- Multi-signal correlation becomes more tightly integrated.

For most, this will be an enhancement. But you should check alert tuning and correlation during transition.
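To see why incident grouping can shift when alerts from different sources are correlated together, consider this toy sketch. It is not Microsoft's correlation logic: it simply groups alerts that share an entity, and the alert IDs and entity labels are invented for illustration.

```python
from collections import defaultdict

def group_alerts(alerts: dict[str, set[str]]) -> list[set[str]]:
    """Group alert IDs that share at least one entity, directly or via a chain."""
    parent = {alert_id: alert_id for alert_id in alerts}

    def find(x: str) -> str:
        # Follow parent links to the group's representative alert.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    first_seen: dict[str, str] = {}  # entity -> first alert that mentioned it
    for alert_id, entities in alerts.items():
        for entity in entities:
            if entity in first_seen:
                parent[find(alert_id)] = find(first_seen[entity])
            else:
                first_seen[entity] = alert_id

    groups: dict[str, set[str]] = defaultdict(set)
    for alert_id in alerts:
        groups[find(alert_id)].add(alert_id)
    return sorted(groups.values(), key=sorted)

# Hypothetical alerts from both products, keyed by made-up IDs.
incidents = group_alerts({
    "sentinel-1": {"user:alice"},
    "defender-1": {"user:alice", "host:pc7"},
    "defender-2": {"host:pc7"},
    "sentinel-2": {"user:bob"},
})
print(incidents)
```

Here three alerts collapse into one incident because they are linked through a shared user and host, while the fourth stays separate. Grouped per product instead, the same alerts would form different incidents, which is why alert tuning is worth re-checking.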

Unified dynamic alerts and incidents

Previously, Defender products generated alerts, which Sentinel grouped into incidents. Now, Defender XDR generates the incidents, with Sentinel analytics alerts feeding into the same incidents, and correlation can occur before an analyst sees the case.

In practice, this could mean that incidents are grouped differently, that alert-to-incident mapping shifts, and that there’s less noise with better cross-product stitching.

While these aren’t disruptive changes, it’s worth making some checks after transition:

- Validate incident population behaviour

- Confirm escalation workflows still align

- Review automation triggers.

Table and data schema: evolution, not revolution

For table and data schema, it’s more a case of incremental alignment than structural change.

Rest assured that Sentinel’s data backbone remains Azure Log Analytics. Tables, custom logs, and KQL queries are not disappearing either. But Microsoft is gradually harmonising schemas between Sentinel log tables, Defender Advanced Hunting, and unified incident entities. This may produce greater normalisation of entity mapping, reduced duplication across alert tables, and closer alignment between hunting queries and SIEM queries.

So, things may not be exactly as you expect. Pay close attention to what is happening and validate schema references during the transition.
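One lightweight way to validate schema references is to check that the table each saved query starts from still exists. The sketch below is a deliberately simple assumption-laden example: it treats the first identifier in a query as the table name, so it would misread queries that begin with `let` or a function call. The helper names and the `LegacyCustom_CL` table are hypothetical.

```python
import re
from typing import Optional

def leading_table(query: str) -> Optional[str]:
    """Return the first identifier in a KQL query, assumed to be its table."""
    match = re.match(r"\s*([A-Za-z_][A-Za-z0-9_]*)", query)
    return match.group(1) if match else None

def missing_tables(queries: dict[str, str], available: set[str]) -> dict[str, str]:
    """Map each query name to its table when that table is not available."""
    missing = {}
    for name, query in queries.items():
        table = leading_table(query)
        if table is not None and table not in available:
            missing[name] = table
    return missing

saved_queries = {
    "failed_signins": 'SigninLogs | where ResultType != "0"',
    "old_custom_feed": "LegacyCustom_CL | take 10",  # hypothetical custom table
}
workspace_tables = {"SigninLogs", "SecurityAlert"}
print(missing_tables(saved_queries, workspace_tables))
```

In a real review you would export the list of tables from your own workspace and run your actual analytics rules, hunting queries and workbooks through a check like this before and after the transition.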

Why is Microsoft doing this?

The move reflects what’s happening across the industry, with security vendors consolidating SIEM and XDR into unified SecOps platforms.

Effective threat detection is increasingly reliant on visibility of identity signals, endpoint telemetry, cloud workloads, email, network logs, and behavioural analytics.

Microsoft is aligning its security offerings to provide:

- One portal

- One incident queue

- Integrated correlation and

- Shared investigation workflows.

Strategically, it strengthens Microsoft’s position as a full-spectrum security provider, enabling organisations to utilise a single pane of glass for SecOps activities.

Licensing is still separate

One of the biggest misconceptions is that Sentinel is being folded into a new Defender licence. This is not the case:

- Sentinel’s (ingestion-based) licensing remains separate

- Defender licensing remains separate

- Portal consolidation does NOT mean licence consolidation.

However, some threat intelligence features are being folded into Defender, so it’s worth reviewing licensing, especially for those with Microsoft 365 E5/A5 subscriptions.

What you need to do to migrate Sentinel

While this isn’t an emergency migration, it would be foolish to do nothing – these are changes that need managing properly.

Recommended actions:

- Plan to transition in good time

- Check your licensing (especially if on an E5/A5 subscription)

- Review RBAC alignment between Azure and Defender

- Test incident grouping behaviour in the unified portal

- Validate custom KQL queries and workbooks

- Update documentation and runbooks.

Handled correctly, you can have a smooth transition.

Consider, too, whether this is an opportunity to formally review your configuration and settings against best practice.

In short

Sentinel isn’t disappearing and Defender isn’t ‘taking over’ – Microsoft is unifying its security stack.